Overview

Firefighter and first-responder training methods have remained virtually unchanged for decades despite the emergence of new technologies. This is due in large part to two factors: the learning curve associated with any new technology, and the risk of relying on a complex system that is more prone to malfunction than the simple tools already in use. As a result, many advantages of new technology are currently being ignored. The tools we are developing, however, would co-exist with and enhance existing training methods rather than replace them.

Project Goals

The Augmented Reality Tools for Improved Training of First Responders project aims to increase the effectiveness of firefighter and first-responder training by providing a real-time link between trainee responders and a trainer or trainee coordinator during practice scenarios. This link would consist of live point-of-view video and positional information streamed from responders wearing Google Glass to a server. The server would then stream this data back to all responders and make it accessible to a coordinator through a web application.
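As an illustration of the positional half of this link, the following is a minimal sketch of what a single position update might look like when sent from a responder's device to the server. The `PositionUpdate` class, its field names and the use of JSON are assumptions made for the example; the project does not specify a message format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class PositionUpdate:
    """One positional sample from a responder's device (hypothetical schema)."""
    responder_id: str     # illustrative identifier, not a project-defined field
    timestamp: float      # seconds since the Unix epoch
    latitude: float       # decimal degrees
    longitude: float      # decimal degrees
    heading_deg: float    # compass heading, 0 = north, increasing clockwise


def encode(update: PositionUpdate) -> str:
    """Serialize an update to a JSON string for transmission to the server."""
    return json.dumps(asdict(update))


def decode(payload: str) -> PositionUpdate:
    """Rebuild an update from the JSON string received by the server."""
    return PositionUpdate(**json.loads(payload))


# Round trip: what the device sends is what the server reconstructs.
sample = PositionUpdate("responder-1", 1400000000.0, 45.4215, -75.6972, 90.0)
restored = decode(encode(sample))
```

The same message could be relayed unchanged to the other responders and to the coordinator's web application, which would keep the three consumers of the data consistent.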

Augmented Reality Tools for Improved Training of First Responders will increase the effectiveness of firefighter and first-responder training in the following ways:

- Provide a real-time link of position and point-of-view video between responders wearing Google Glass and a trainer or trainee coordinator during practice scenarios. This information increases the coordinators’ situational awareness by allowing them to monitor the responders’ activity precisely, either from an overhead view or from the perspective of any member of the response team.

- Provide responders with training scenario information through a hands-free, heads-up display: This would let the responders visualize the path they have followed and allow for display of virtual beacons that indicate important positions or objects in the environment. It would also allow the coordinators to offer real-time guidance as needed, for example by prompting the responder with the recommended search pattern. Coordinators could also modify the training scenario at any point to introduce appropriate challenges.

- Provide a valuable assessment and debriefing tool: The entire training scenario would be recorded for use in assessing the responders' performance and for debriefing. This would make it easier to identify and correct any weaknesses. Responders would also be able to review their own performance and improve on it.

Features

The following features are being evaluated for inclusion in the pilot stage.

Coordinator/Trainer Tool

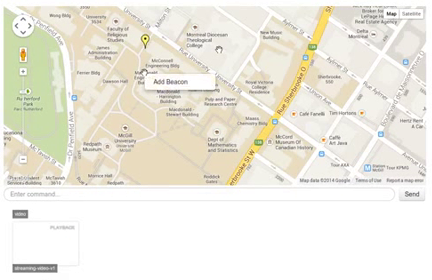

The coordinator or trainer is given a web application as the interface to the system. This HTML5 tool aims to be compatible across browsers and requires no additional browser plugins.

The primary view shows an overhead map of the mission area along with a list of thumbnails showing live video streams from each responder. Responders' positions are indicated, as are any markers that have been placed. These markers are used to indicate important positions, for example: ingress/egress points, injured victims, obstructions or hazards. They can be placed by the coordinator through this web app or by responders on the scene.
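The marker concept described above could be modelled along the following lines. This is only a sketch: the `MarkerKind`, `Marker` and `MarkerBoard` names are hypothetical, the marker kinds are taken from the examples in the text, and the in-memory list stands in for whatever storage the server would actually use.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class MarkerKind(Enum):
    """Marker categories, taken from the examples given in the text."""
    INGRESS_EGRESS = "ingress_egress"
    VICTIM = "victim"
    OBSTRUCTION = "obstruction"
    HAZARD = "hazard"


@dataclass(frozen=True)
class Marker:
    kind: MarkerKind
    latitude: float    # decimal degrees
    longitude: float   # decimal degrees
    placed_by: str     # "coordinator" or a responder identifier (assumed convention)


class MarkerBoard:
    """In-memory collection of markers shared by the coordinator and responders."""

    def __init__(self) -> None:
        self._markers: List[Marker] = []

    def place(self, marker: Marker) -> None:
        """Add a marker, whether it comes from the web app or from the scene."""
        self._markers.append(marker)

    def of_kind(self, kind: MarkerKind) -> List[Marker]:
        """Return all markers of one category, in the order they were placed."""
        return [m for m in self._markers if m.kind == kind]


board = MarkerBoard()
board.place(Marker(MarkerKind.VICTIM, 45.4216, -75.6970, "responder-1"))
board.place(Marker(MarkerKind.HAZARD, 45.4214, -75.6975, "coordinator"))
victims = board.of_kind(MarkerKind.VICTIM)
```

Recording who placed each marker keeps the two entry points (web app and on-scene responder) distinguishable, which may be useful during debriefing.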

Responder Tool

The responder is equipped with a wearable device that provides video recording, positional information, a heads-up display and a wireless network connection. The hardware currently being used is Google Glass, as it is the most cost-effective platform with these capabilities. The display can be toggled between two views: an augmented reality (AR) view and an overhead view.

Augmented Reality View

This view would present the responder with a visual representation of scenario information overlaid on their camera's video input. This information could consist of, for example, the positions of other responders, the position of markers and the path the responder has followed so far (‘breadcrumbs’).

A depiction of the responder's augmented reality view

Legend: Responder, Marker, Breadcrumbs
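One way to decide where an overlay item should appear in the AR view is to compare the compass bearing to the item with the direction the responder is facing. The sketch below assumes that positions are available as latitude and longitude and that the device reports a compass heading; the function names, the field-of-view value and the display width are illustrative, and the linear angle-to-pixel mapping is a simplification rather than a full camera projection.

```python
import math
from typing import Optional


def bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Initial compass bearing from point 1 to point 2, in degrees (0 = north)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(y, x)) % 360.0


def screen_x(heading_deg: float, target_bearing_deg: float,
             fov_deg: float, width_px: int) -> Optional[float]:
    """Horizontal pixel position of a target, or None if it is out of view."""
    # Signed angle from the centre of the view to the target, in [-180, 180).
    offset = (target_bearing_deg - heading_deg + 180.0) % 360.0 - 180.0
    if abs(offset) > fov_deg / 2.0:
        return None
    # Linear mapping: left edge at -fov/2, centre at 0, right edge at +fov/2.
    return (offset / fov_deg + 0.5) * width_px


# Responder facing east; a marker due east lands in the centre of the view.
east_bearing = bearing_deg(0.0, 0.0, 0.0, 1.0)
centre = screen_x(90.0, east_bearing, fov_deg=60.0, width_px=640)
behind = screen_x(0.0, 180.0, fov_deg=60.0, width_px=640)  # target behind: None
```

Items that fall outside the field of view return `None` here; a real display might instead show an edge indicator pointing toward them.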

Overhead View

This view would show the scenario information relative to the responder from an overhead, or ‘radar,’ perspective. This information would be overlaid on a background of the building schematics, where available. Otherwise, an exterior map view (e.g., Google Maps) would be used.

Legend: Responder, Marker, Breadcrumbs
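A responder-relative 'radar' layout of this kind can be sketched in two steps: convert each position to an east/north offset in metres from the responder, then rotate that offset so the responder's heading points up the display. The code below is an assumption-laden illustration: the function names are hypothetical, and the equirectangular approximation is only reasonable over the short distances of a training site.

```python
import math
from typing import Tuple

EARTH_RADIUS_M = 6371000.0  # mean Earth radius in metres


def to_local_metres(origin_lat: float, origin_lon: float,
                    lat: float, lon: float) -> Tuple[float, float]:
    """East/north offset in metres from the origin (equirectangular approximation)."""
    east = (math.radians(lon - origin_lon) * EARTH_RADIUS_M
            * math.cos(math.radians(origin_lat)))
    north = math.radians(lat - origin_lat) * EARTH_RADIUS_M
    return east, north


def to_radar(east: float, north: float, heading_deg: float) -> Tuple[float, float]:
    """Rotate an east/north offset so the responder's heading points up.

    Returns (right, ahead) in metres relative to the responder.
    """
    h = math.radians(heading_deg)
    right = east * math.cos(h) - north * math.sin(h)
    ahead = east * math.sin(h) + north * math.cos(h)
    return right, ahead


# Responder facing east: a point 10 m to the east is straight ahead,
# and a point 10 m to the north is on the responder's left.
ahead_point = to_radar(10.0, 0.0, heading_deg=90.0)
left_point = to_radar(0.0, 10.0, heading_deg=90.0)
```

Scaling the resulting `(right, ahead)` pairs by a pixels-per-metre factor would place each responder, marker and breadcrumb on the display, with the schematic or map background rotated by the same heading.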