In an augmented reality environment, traditional input interfaces such as the keyboard-mouse combination are no longer adequate. Since gestural language has always been an important aspect of human interaction, a hand gesture system is highly advantageous for augmented environments. In many earlier hand gesture systems, hand tracking and pose recognition were achieved with the assistance of specialized devices (e.g., data gloves or markers). Although these devices provide accurate tracking and shape information, they are too cumbersome for use over extended periods. We are researching a more suitable alternative that employs computer vision techniques to perform hand tracking and gesture recognition. Our prototype assumes a digital desktop environment where the video camera has a good view of the user's palm.

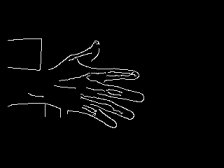

A skin-color model stored in the chromatic color space is used to extract the skin region from the image captured by a camera. The largest blob in the segmented image is taken to be the hand region. The boundary of the hand is then extracted for further processing.

Figure (left to right): normal video image with user's hand; detected skin region; extracted hand boundary.
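The segmentation steps described above can be sketched roughly as follows. This is a minimal illustration, not our actual implementation: the skin-color model is replaced by a simple box threshold on the chromatic coordinates, and the threshold values, the function names and the use of 4-connectivity are all assumptions made for the example.

```python
from collections import deque

def chromatic(r, g, b):
    """Map an RGB pixel to chromatic (intensity-normalized) coordinates."""
    total = r + g + b
    if total == 0:
        return (0.0, 0.0)
    return (r / total, g / total)

def skin_mask(image, r_range=(0.36, 0.47), g_range=(0.28, 0.36)):
    """Flag pixels whose chromatic coordinates fall inside an assumed skin box.

    The ranges are illustrative placeholders, not a trained skin-color model.
    """
    mask = []
    for row in image:
        mask_row = []
        for (r, g, b) in row:
            cr, cg = chromatic(r, g, b)
            mask_row.append(r_range[0] <= cr <= r_range[1]
                            and g_range[0] <= cg <= g_range[1])
        mask.append(mask_row)
    return mask

def largest_blob(mask):
    """Return the (row, col) pixels of the largest 4-connected region."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = set()
    best = set()
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or (y, x) in seen:
                continue
            # Breadth-first flood fill of one connected region.
            blob = set()
            queue = deque([(y, x)])
            seen.add((y, x))
            while queue:
                cy, cx = queue.popleft()
                blob.add((cy, cx))
                for ny, nx in ((cy - 1, cx), (cy + 1, cx),
                               (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] and (ny, nx) not in seen):
                        seen.add((ny, nx))
                        queue.append((ny, nx))
            if len(blob) > len(best):
                best = blob
    return best

def boundary(blob):
    """Blob pixels that have at least one 4-neighbor outside the blob."""
    return {(y, x) for (y, x) in blob
            if any(n not in blob for n in ((y - 1, x), (y + 1, x),
                                           (y, x - 1), (y, x + 1)))}
```

Working in chromatic coordinates removes most of the dependence on brightness, which is why a skin model in that space can tolerate some lighting variation. Calling `boundary(largest_blob(skin_mask(image)))` on an image given as rows of `(r, g, b)` tuples yields the hand outline used by the later stages.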

We are currently experimenting with a segmentation method based on motion and edge detection that uses our multiple object tracker (by François Cayouette). In summary, the tracker applies edge detection to the current video frame and removes all edges corresponding to the background. It also relies on motion detection to locate the current object of interest in the scene.
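The background-edge removal idea can be illustrated with a small sketch. It is not the tracker's actual code: the gradient-based edge detector, the fixed threshold, the availability of a reference background image and the function names are all assumptions, and the motion detection part is left out entirely.

```python
def edge_map(gray, threshold=50):
    """Binary edge map from the central-difference gradient (L1 magnitude)."""
    height, width = len(gray), len(gray[0])
    edges = [[False] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gray[y][x + 1] - gray[y][x - 1]
            gy = gray[y + 1][x] - gray[y - 1][x]
            edges[y][x] = abs(gx) + abs(gy) >= threshold
    return edges

def foreground_edges(frame, background, threshold=50):
    """Keep only the frame edges that are absent from the background edges."""
    frame_edges = edge_map(frame, threshold)
    background_edges = edge_map(background, threshold)
    return [[f and not b for f, b in zip(frame_row, background_row)]
            for frame_row, background_row in zip(frame_edges, background_edges)]
```

Both inputs are grayscale images given as lists of rows of intensities. A practical version would probably need some tolerance for small misalignments between frame and background edges, which this sketch ignores.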

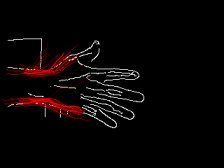

Using only the foreground edges, we developed a wrist tracker based on the particle filter (also known as the CONDENSATION algorithm) to track the wrist position, orientation and size. The next image shows the observation model that we use to detect edge features corresponding to a wrist in the edge image. The two blue curves in the image approximate the edge shape of the wrist. Since the shape of the user's wrist will certainly differ slightly from the model, we allow a deviation error when detecting the actual edge feature, shown by the yellow segments.

Figure: wrist model.

The input to the wrist tracker is the edge image containing the foreground objects. The tracker then makes a certain number of observations generated from the prior probabilistic model. The red lines in the next image indicate the most probable location, orientation and size of the wrist.

Figure (left to right): input image to the tracker; red lines indicate the most probable wrist location.
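To make the select, predict and measure cycle of a particle filter concrete, here is a minimal sketch of one iteration over wrist hypotheses `(x, y, angle, width)`. It is an illustration under stated assumptions, not our tracker: the random-walk dynamics, the noise levels and the function names are hypothetical, and `toy_likelihood` is a stand-in Gaussian score that replaces the edge-based wrist observation model.

```python
import math
import random

def condensation_step(particles, weights, likelihood, rng,
                      noise=(2.0, 2.0, 0.05, 0.5)):
    """One select-predict-measure iteration over (x, y, angle, width) samples."""
    count = len(particles)
    # Select: resample with replacement in proportion to the previous weights.
    selected = rng.choices(particles, weights=weights, k=count)
    # Predict: diffuse each sample with Gaussian noise (assumed random walk).
    predicted = [tuple(v + rng.gauss(0.0, s) for v, s in zip(p, noise))
                 for p in selected]
    # Measure: score each hypothesis against the observation.
    raw = [likelihood(p) for p in predicted]
    total = sum(raw)
    if total > 0.0:
        new_weights = [w / total for w in raw]
    else:
        # No hypothesis has any support: fall back to uniform weights.
        new_weights = [1.0 / count] * count
    return predicted, new_weights

def estimate(particles, weights):
    """Weighted mean state (the angle is averaged naively, without wrap-around)."""
    return tuple(sum(w * p[i] for p, w in zip(particles, weights))
                 for i in range(4))

def toy_likelihood(target, sigmas):
    """Hypothetical stand-in for the wrist observation model."""
    def likelihood(p):
        d2 = sum(((v - t) / s) ** 2 for v, t, s in zip(p, target, sigmas))
        return math.exp(-0.5 * d2)
    return likelihood
```

In use, the particles would be initialized from the prior, `condensation_step` would be called once per video frame with a likelihood computed from the current edge image, and `estimate` would give the state drawn as the most probable wrist.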

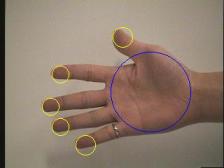

Since the number and position of the fingers provide important information about the shape of the hand, the first step in our hand gesture recognition is to find the position of the fingertips. The current approach is to exploit the semi-circular shape of the top of the fingers to locate the fingertips.

The extremity of a fingertip can be modeled by a circular arc, so the fingertips can be located by looking for circles in the hand boundary image. We estimate the fingertip radius from the size of the palm. We then apply a circular Hough transform to the hand boundary image to detect circular patterns; a real fingertip location will most likely produce a strong response in the Hough image. Finally, we run several tests on the Hough image to filter out false positives and duplicate detections.
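A circular Hough transform with a known radius can be sketched as follows. This is a simplified illustration rather than our implementation: the function names, the angular resolution and the vote threshold are assumptions, and the filtering tests for false positives and duplicates are not shown.

```python
import math
from collections import Counter

def circular_hough(boundary_points, radius, angle_steps=72):
    """Accumulate votes for circle centers at a fixed, known radius.

    Each boundary point votes for every grid cell lying at distance
    `radius` from it; a cell receives at most one vote per point.
    """
    votes = Counter()
    for (x, y) in boundary_points:
        cells = set()
        for k in range(angle_steps):
            theta = 2.0 * math.pi * k / angle_steps
            cx = round(x - radius * math.cos(theta))
            cy = round(y - radius * math.sin(theta))
            cells.add((cx, cy))
        for cell in cells:
            votes[cell] += 1
    return votes

def strongest_centers(votes, min_votes):
    """Candidate centers, strongest first, with at least `min_votes` votes."""
    return [center for center, count in votes.most_common()
            if count >= min_votes]
```

Because a fingertip is only an arc, roughly half a circle, its center collects fewer votes than a full circle would, so `min_votes` has to be chosen with that in mind. Points along the straight sides of a finger also vote, which is one source of the false positives that need to be filtered afterwards.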

last updated on 27/9/2004 by Siu Chi Chan