The quality of a mosaic depends largely on the quality of registration among its constituent input images. Parallax and object motion make registration difficult, leading to artifacts in the result. To reduce the impact of these artifacts, traditional image mosaicing approaches often impose planar scene constraints, or rely on purely rotational camera motion or dense sampling. However, these requirements are often impractical or fail to address the needs of all applications. Instead, by taking advantage of depth cues and a smooth transition criterion, we achieve significantly improved mosaicing results for static scenes, coping effectively with non-trivial parallax in the input. We extend this approach to the synthesis of dynamic video mosaics, incorporating foreground/background segmentation and a consistent motion perception criterion. Although further additions are required to cope with unconstrained object motion, our algorithm can synthesize perceptually convincing output that conveys the same appearance of motion as seen in the input sequences.

The work above evolved from our initial approach to overcoming parallax-related problems, inspired by manifold techniques that use dense sampling of the environment to build multi-perspective projection panoramas. As a small number of cameras in fixed positions cannot by themselves provide such a sampling, we turn to synthesis as an alternative. Starting from a stereo pair of cameras with a large baseline, along with their calibration parameters, we synthesize a set of virtual images taken from intermediate positions to compensate for the limited sampling rate. These virtual frames decrease the effective baseline distance and, in turn, reduce disparity effects between adjacent images, whether real or virtual, resulting in substantially improved mosaics.
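The effect of intermediate virtual views on disparity can be illustrated with a minimal sketch. It is not the implementation behind the results shown here: it assumes a rectified stereo pair, a known per-pixel disparity map for the left image, and evenly spaced virtual cameras. The function names (`disparity_px`, `adjacent_baseline`, `synthesize_view`) are hypothetical, and the simple forward warp stands in for whatever view synthesis method is actually used.

```python
import numpy as np


def disparity_px(focal_px, baseline, depth):
    """Disparity in pixels for a rectified pair: d = f * B / Z."""
    return focal_px * baseline / depth


def adjacent_baseline(baseline, n_virtual):
    """Baseline between neighbouring views after inserting n_virtual
    evenly spaced virtual cameras between the two real ones (assumption)."""
    return baseline / (n_virtual + 1)


def synthesize_view(left, disparity, alpha):
    """Forward-warp the left image to a virtual camera located at
    fraction alpha of the baseline (0 = left camera, 1 = right camera).

    Assumes the convention x_right = x_left - disparity. Returns the
    warped image and a mask of target pixels that received a value;
    unfilled pixels are holes (disocclusions) left at zero.
    """
    h, w = disparity.shape
    out = np.zeros_like(left)
    # Largest disparity written so far at each target pixel, so that
    # nearer surfaces (larger disparity) win when two pixels collide.
    zbuf = np.full((h, w), -np.inf)
    for y in range(h):
        for x in range(w):
            d = float(disparity[y, x])
            xt = int(round(x - alpha * d))
            if 0 <= xt < w and d > zbuf[y, xt]:
                out[y, xt] = left[y, x]
                zbuf[y, xt] = d
    return out, np.isfinite(zbuf)


if __name__ == "__main__":
    # Three virtual views cut the adjacent-view baseline, and hence
    # the disparity at a given depth, by a factor of four.
    b_adj = adjacent_baseline(1.0, 3)
    print(disparity_px(800.0, 1.0, 10.0), disparity_px(800.0, b_adj, 10.0))

    left = np.array([[10, 20, 30, 40, 50, 60]], dtype=np.uint8)
    disp = np.full((1, 6), 2.0)
    halfway, mask = synthesize_view(left, disp, 0.5)
    print(halfway, mask)
```

Because disparity is proportional to the baseline, each neighbouring pair in the augmented sequence exhibits a fraction of the parallax of the original pair. The price is that the warp leaves holes wherever the virtual camera sees surfaces hidden from the left camera, which a practical system would need to fill, for example from the right image.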

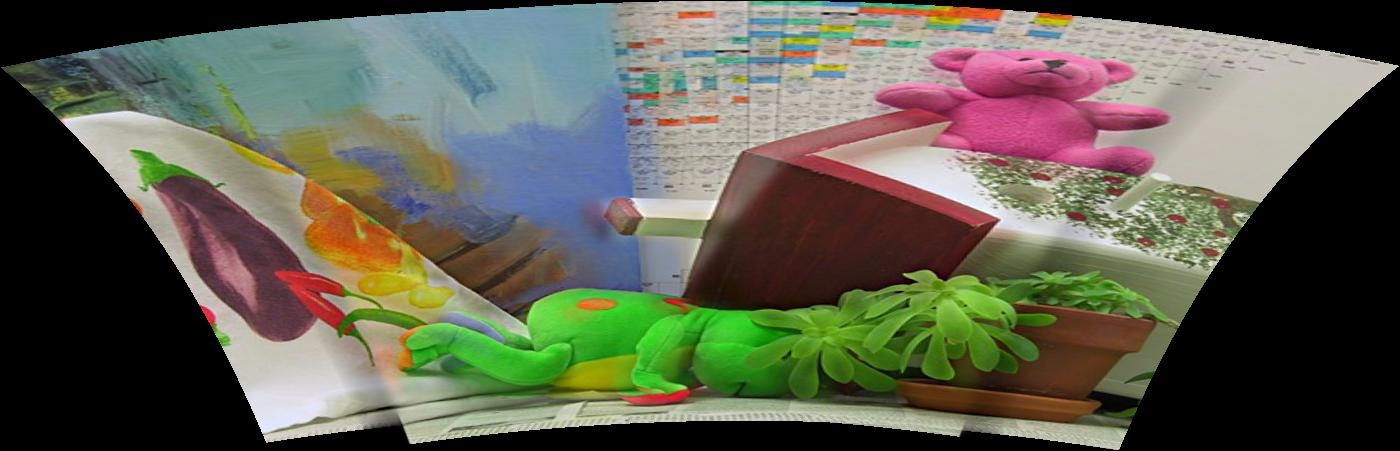

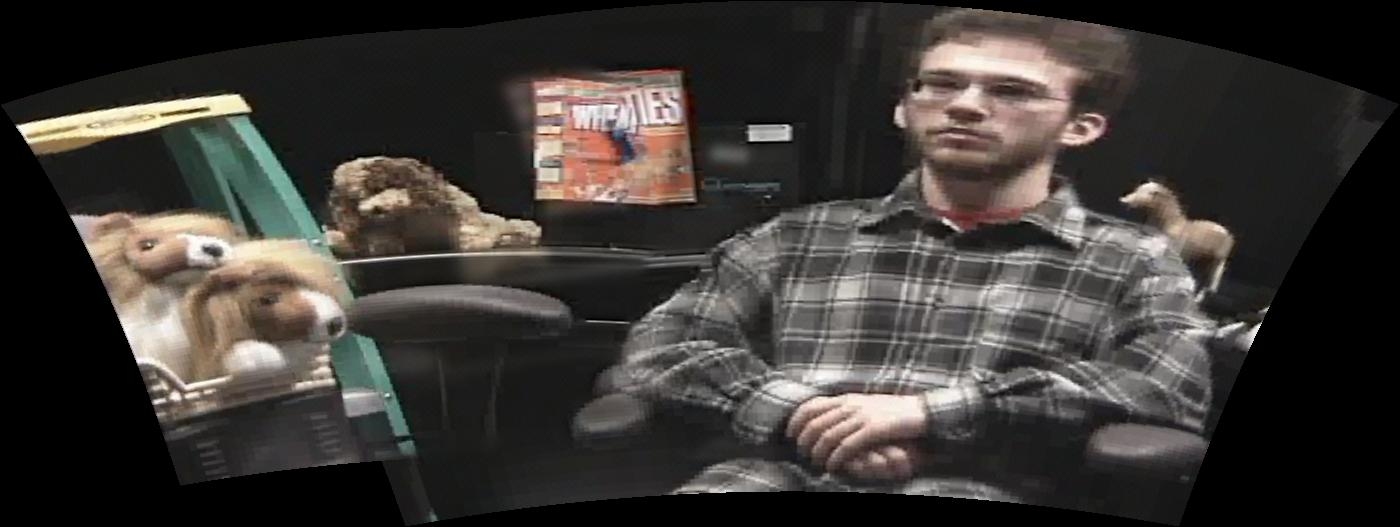

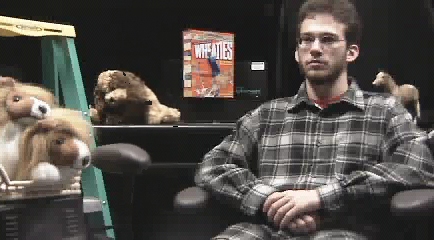

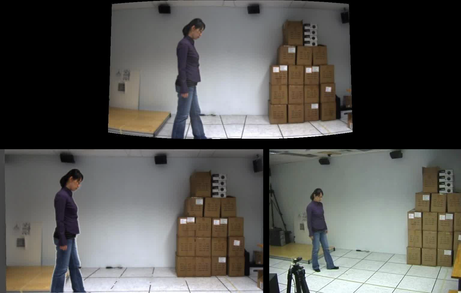

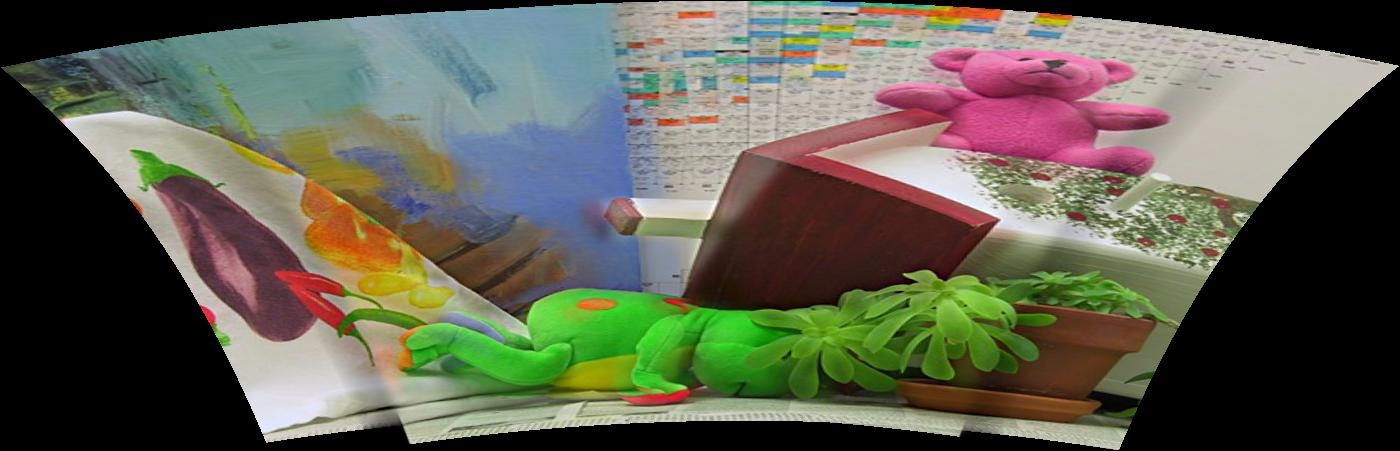

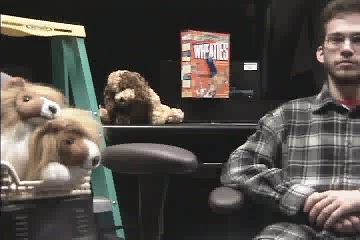

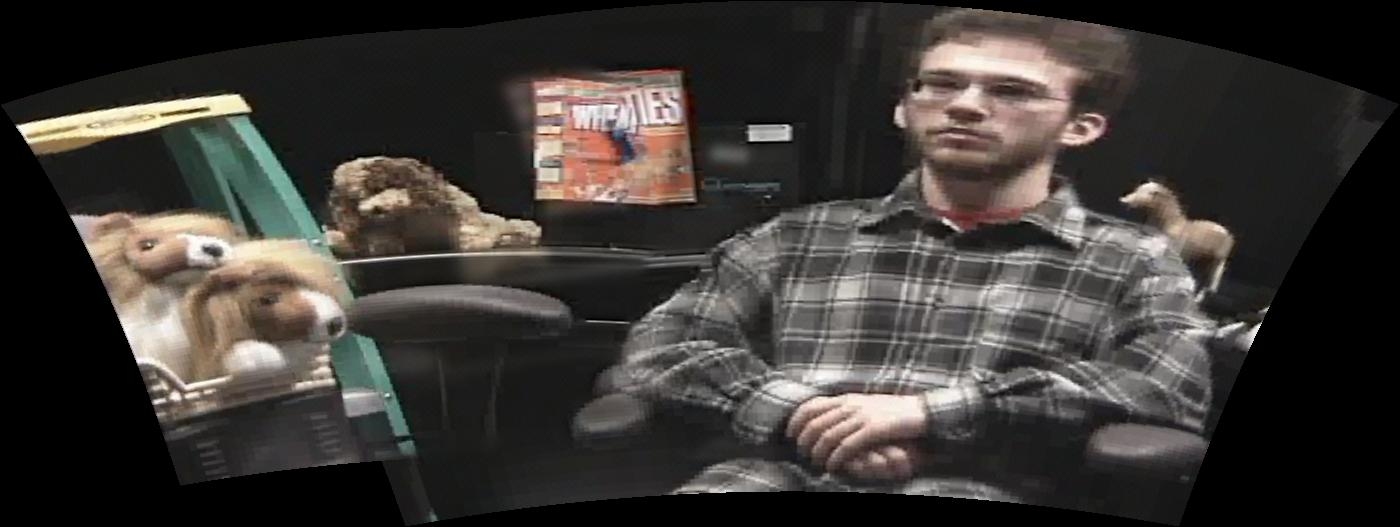

Panels, left to right: left image + right image = Autostitch result, followed by the virtual dense mosaic result.

Last update: 5 November 2009