This research is being undertaken as part of an NSERC/MEDTEQ collaborative research and development project with industrial partners iMD Research and IA Précision Santé Mentale.

Treating auditory hallucinations has traditionally required multiple trials of antipsychotic medications. However, approximately one in three patients with schizophrenia is resistant to antipsychotic therapies (Barnes et al., 2003). Cognitive Behavioral Therapy (CBT) is a non-pharmacological treatment used in schizophrenia to reduce the positive and negative symptoms of the disease (Zimmerman et al., 2005). Recently, a CBT-inspired approach called Avatar Therapy (Leff et al., 2014) was developed and shown to effectively reduce the distress and helplessness associated with auditory hallucinations.

Using the principles of CBT, Avatar Therapy (AT) safely exposes patients with auditory hallucinations to an emulation of the most distressing voice they hear. Recent research on AT (Dellazizzo et al., 2018; Percy du Sert et al., 2018) has shown that results are enhanced when patients are exposed to a 3D avatar in a Virtual Reality (VR) environment. However, the promise of non-pharmacological treatments of hallucinations using 3D VR technology is matched by the many scientific and technological questions that remain open.

In this project, we are designing a VR environment to address a number of these open questions. Foremost, a large fraction of patients with schizophrenia hear more than one voice, whereas current AT models have focused on isolating the single voice that the patient characterizes as the "most distressing". We therefore plan to design a system that can expose patients to multiple voices, either sequentially or concomitantly.

Furthermore, hallucinations in schizophrenia are not limited to the auditory modality. Close to 60 percent of patients with schizophrenia have auditory hallucinations, and roughly one quarter of those patients also have visual hallucinations (Llorca et al., 2016); recent findings further suggest that olfactory/gustatory and kinesthetic hallucinations are more frequent than visual hallucinations in schizophrenia (Llorca et al., 2016). Hence, we will build successive prototypes to quantify the benefit of additional forms of immersion along a continuum. We will begin by examining the net effect of adding graphical representations (2D and immersive 3D) to audio-only (spatialized and non-spatialized) versions of avatar therapy. Given that current evidence suggests that greater sensory immersion is associated with greater effectiveness of avatar therapy (Dellazizzo et al., 2018; Percy du Sert et al., 2018), we will then quantify the added benefit of supplemental visual representations (2D and immersive 3D), of haptics, and of the combination of spatialized audio, immersive 3D VR, and haptics.

The prototypes we are developing will be evaluated in small clinical settings to validate the functionality of their technological features. Ultimately, we plan to build and validate a prototype that can render multiple spatialized voices, 3D VR visual representations, and haptic sensations, and that can selectively activate or deactivate any subset of these three modalities.
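
As a rough illustration of how such a prototype might enumerate its experimental conditions, the sketch below lists every on/off combination of the three modalities. The modality identifiers and the `modality_subsets`/`condition_flags` helpers are hypothetical, not part of the project's software.

```python
from itertools import combinations

# Hypothetical identifiers for the three modalities described in the text:
# spatialized voices, 3D VR visuals, and haptic sensations.
MODALITIES = ("spatial_audio", "vr_visuals", "haptics")


def modality_subsets(modalities=MODALITIES):
    """Return every subset of the modalities, from none enabled to all enabled."""
    return [
        frozenset(combo)
        for k in range(len(modalities) + 1)
        for combo in combinations(modalities, k)
    ]


def condition_flags(enabled, modalities=MODALITIES):
    """Map a set of enabled modalities to an explicit on/off flag per modality."""
    return {m: m in enabled for m in modalities}


if __name__ == "__main__":
    for subset in modality_subsets():
        print(condition_flags(subset))
```

With three modalities this yields 2³ = 8 combinations; in practice some of them (for instance, everything disabled) may not correspond to a meaningful therapy condition and would simply be skipped.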

While self-report is commonly used to assess therapeutic effectiveness, we aim to provide a more objective measure of the different conditions studied by analyzing bio-signals recorded from the patients, in particular by examining the influence of each condition on physiological parameters associated with stress.

Our present avatar creation pipeline consists of three phases: graphical exploration through a GAN-based image map, voice transformation to a chosen approximation of the sound of the patient's hallucination, and mapping of the graphical avatar to a 3D model for animation control.

| Graphical avatar creation (ICMLA paper) | Voice matching (CHI paper) | 3D avatar animation |
|---|---|---|
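
One common way to build a browsable image map from a GAN, shown here only as an assumption about how such a map could work, is to interpolate between a few latent vectors over a 2D grid and render each grid cell with the generator. The sketch below covers only the interpolation step; the 512-dimensional latent space, the random corner vectors, and the `latent_grid` helper are hypothetical, and the generator itself is left out. Spherical interpolation is often preferred for Gaussian latents; plain bilinear interpolation keeps the example short.

```python
import numpy as np


def latent_grid(corners, rows, cols):
    """Bilinearly interpolate four corner latent vectors over a rows x cols grid.

    corners: shape (4, latent_dim), ordered top-left, top-right,
    bottom-left, bottom-right. Returns shape (rows, cols, latent_dim).
    """
    tl, tr, bl, br = np.asarray(corners, dtype=float)
    u = np.linspace(0.0, 1.0, cols)[None, :, None]  # horizontal weight per column
    v = np.linspace(0.0, 1.0, rows)[:, None, None]  # vertical weight per row
    top = (1.0 - u) * tl + u * tr        # interpolated top edge, (1, cols, d)
    bottom = (1.0 - u) * bl + u * br     # interpolated bottom edge, (1, cols, d)
    return (1.0 - v) * top + v * bottom  # blend the two edges, (rows, cols, d)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    corners = rng.standard_normal((4, 512))  # hypothetical 512-d latent codes
    grid = latent_grid(corners, rows=5, cols=5)
    print(grid.shape)  # each grid[i, j] would be fed to a (hypothetical) generator
```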

For our haptic actuation mechanism, we are developing a set of pneumatically actuated finger-style devices that can deliver force sensations evocative of human touch.

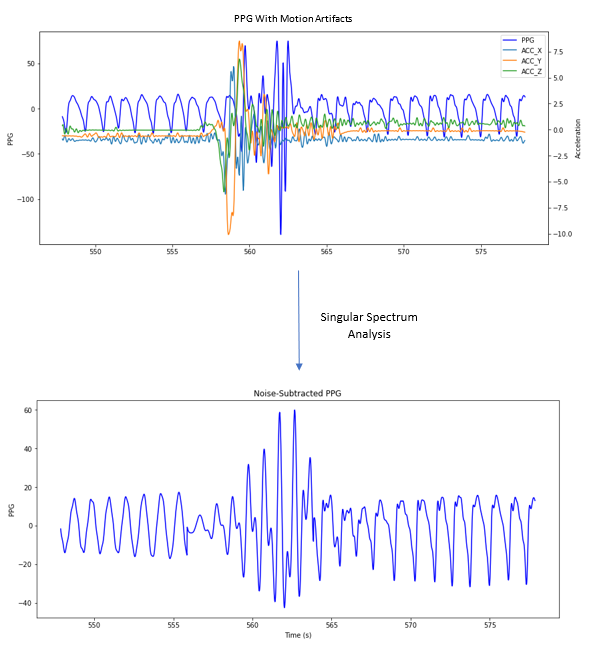

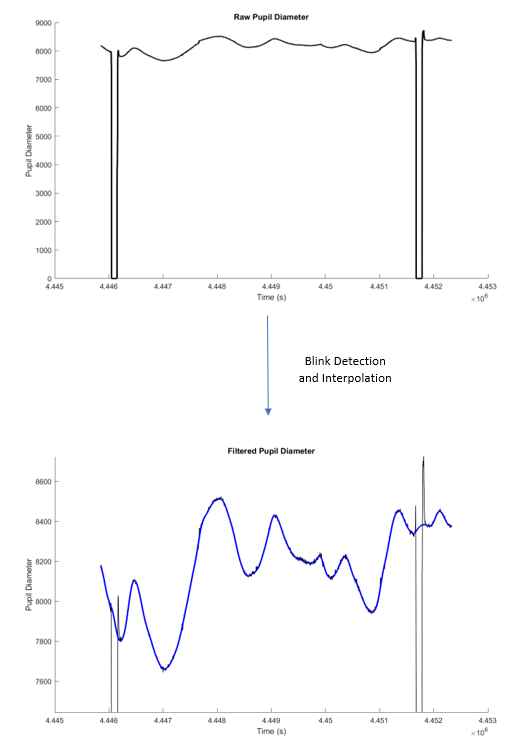

We have started the groundwork for physiological signal analysis: processing photoplethysmography (PPG) data to extract heart rate variability (HRV), and filtering blink activity out of eye-tracker recordings to obtain more stable pupil diameter measurements, a potential avenue toward evaluating cognitive load or engagement with the avatar. Examples of this signal processing are provided below.
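
As a rough illustration of the PPG-to-HRV step, the following minimal NumPy sketch goes from a raw PPG trace to inter-beat intervals (IBIs) and one common time-domain HRV measure, RMSSD. It assumes a single-channel PPG sampled at a known rate `fs`; the moving-average filters are a crude stand-in for a proper band-pass filter (e.g., Butterworth), and all thresholds and helper names are illustrative assumptions, not the project's pipeline.

```python
import numpy as np


def moving_average(x, window):
    """Centered moving average with edge padding (window forced to an odd length)."""
    window = int(window) | 1
    padded = np.pad(x, window // 2, mode="edge")
    return np.convolve(padded, np.ones(window) / window, mode="valid")


def crude_bandpass(ppg, fs, baseline_s=1.0, smooth_s=0.1):
    """Subtract slow baseline wander, then smooth fast noise (a crude band-pass)."""
    ppg = np.asarray(ppg, dtype=float)
    detrended = ppg - moving_average(ppg, baseline_s * fs)
    return moving_average(detrended, smooth_s * fs)


def detect_peaks(x, fs, min_interval_s=0.33):
    """Positive local maxima kept at least `min_interval_s` apart (about 180 bpm max)."""
    min_dist = int(min_interval_s * fs)
    is_max = (x[1:-1] > x[:-2]) & (x[1:-1] >= x[2:]) & (x[1:-1] > 0)
    peaks = []
    for i in np.flatnonzero(is_max) + 1:
        if peaks and i - peaks[-1] < min_dist:
            if x[i] > x[peaks[-1]]:
                peaks[-1] = i  # of two peaks that are too close, keep the taller one
        else:
            peaks.append(i)
    return np.array(peaks, dtype=int)


def inter_beat_intervals(peaks, fs, lo_s=0.33, hi_s=1.5):
    """Peak indices -> inter-beat intervals in seconds, dropping implausible values."""
    ibi = np.diff(peaks) / fs
    return ibi[(ibi >= lo_s) & (ibi <= hi_s)]


def rmssd(ibi):
    """Root mean square of successive IBI differences, in seconds."""
    return float(np.sqrt(np.mean(np.diff(ibi) ** 2)))


if __name__ == "__main__":
    fs = 100.0
    t = np.arange(0, 60, 1 / fs)
    # Synthetic PPG: 72 bpm pulse wave plus slow baseline drift.
    ppg = np.sin(2 * np.pi * 1.2 * t) + 0.5 * np.sin(2 * np.pi * 0.1 * t)
    ibi = inter_beat_intervals(detect_peaks(crude_bandpass(ppg, fs), fs), fs)
    print(f"mean IBI {ibi.mean():.3f} s, RMSSD {1000 * rmssd(ibi):.1f} ms")
```

Dropping implausible intervals is a very simple form of artifact rejection; real recordings with motion artifacts would likely need more robust beat detection and interval correction.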

Filtering motion artifacts from PPG data, in preparation for the potential characterization of hallucinations in schizophrenia. A decrease in the high-frequency power of heart rate variability is known to correlate with such episodes.
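
The high-frequency (HF) power mentioned above is conventionally integrated over 0.15–0.40 Hz of the HRV spectrum. Because beats are unevenly spaced in time, a common approach is to resample the IBI series onto a uniform grid before estimating the spectrum. The sketch below assumes that approach, with a 4 Hz resampling rate and a single Hann-windowed periodogram; these are illustrative choices (Welch averaging or a Lomb-Scargle periodogram are common alternatives), and `hf_power` is a hypothetical helper, not the project's implementation.

```python
import numpy as np


def hf_power(beat_times, ibi, fs_resample=4.0, band=(0.15, 0.40)):
    """Integrated HRV power (ms^2) in the high-frequency band.

    beat_times: time (s) of each beat; ibi: the interval (s) ending at that beat.
    """
    beat_times = np.asarray(beat_times, dtype=float)
    ibi_ms = np.asarray(ibi, dtype=float) * 1000.0
    # Resample the unevenly spaced IBI series onto a uniform time grid.
    grid = np.arange(beat_times[0], beat_times[-1], 1.0 / fs_resample)
    x = np.interp(grid, beat_times, ibi_ms)
    x -= x.mean()
    # Hann-windowed periodogram, scaled to a one-sided power spectral density.
    w = np.hanning(len(x))
    psd = 2.0 * np.abs(np.fft.rfft(x * w)) ** 2 / (fs_resample * np.sum(w ** 2))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs_resample)
    in_band = (freqs >= band[0]) & (freqs < band[1])
    return float(np.sum(psd[in_band]) * (freqs[1] - freqs[0]))


if __name__ == "__main__":
    # Synthetic IBIs: 0.8 s mean with a 0.25 Hz (breathing-rate) modulation.
    times, ibis, t = [], [], 0.0
    while t < 300.0:
        interval = 0.8 + 0.05 * np.sin(2 * np.pi * 0.25 * t)
        t += interval
        times.append(t)
        ibis.append(interval)
    print(f"HF power: {hf_power(times, ibis):.0f} ms^2")
```

A decrease in this quantity between two recording windows is the kind of change the caption above refers to; the window length needed for a stable estimate is a separate design decision.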

Filtering blink activity from pupil diameter measurements, in preparation for ocular biomarker detection.
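
A minimal NumPy sketch of one common blink-handling approach, assuming the eye tracker reports blinks as missing (NaN) or zero diameter: invalid samples are masked, the mask is widened by a margin to discard the partially occluded samples at blink onset and offset, and short gaps are bridged by linear interpolation. The 100 ms margin, the 500 ms maximum gap, and the `remove_blinks` helper are illustrative assumptions, not values or code from the project.

```python
import numpy as np


def remove_blinks(pupil, fs, margin_s=0.1, max_gap_s=0.5):
    """Mask blinks in a pupil-diameter trace and bridge short gaps linearly.

    Assumes blinks/track loss appear as NaN or non-positive values. Each invalid
    run is widened by `margin_s` on both sides. Runs longer than `max_gap_s`,
    or touching either end of the recording, are left as NaN.
    """
    pupil = np.asarray(pupil, dtype=float)
    invalid = ~np.isfinite(pupil) | (pupil <= 0)
    # Dilate the invalid mask by `margin` samples in both directions.
    margin = int(round(margin_s * fs))
    widened = invalid.copy()
    for s in range(1, margin + 1):
        widened[s:] |= invalid[:-s]
        widened[:-s] |= invalid[s:]
    cleaned = np.where(widened, np.nan, pupil)
    # Locate each run of masked samples as [start, end) index pairs.
    edges = np.diff(np.concatenate(([0], widened.astype(int), [0])))
    starts, ends = np.flatnonzero(edges == 1), np.flatnonzero(edges == -1)
    for s, e in zip(starts, ends):
        if s == 0 or e == len(cleaned) or (e - s) / fs > max_gap_s:
            continue  # no valid sample on one side, or gap too long to bridge
        cleaned[s:e] = np.interp(np.arange(s, e), [s - 1, e], [cleaned[s - 1], cleaned[e]])
    return cleaned
```

Leaving long gaps as NaN, rather than interpolating across them, avoids inventing pupil dynamics over periods where the tracker lost the eye; downstream analyses can then exclude those segments explicitly.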
