HapticGen

Tool Summary

| Metadata | |
|---|---|
| Release Yearⓘ The year a tool was first publicly released or discussed in an academic paper. | 2025 |
| Platformⓘ The OS or software framework needed to run the tool. | Python |
| Availabilityⓘ If the tool can be obtained by the public. | Available |
| Licenseⓘ The type of license applied to the tool. | Open Source (MIT) |
| Venueⓘ The venue(s) for publications. | ACM CHI |
| Intended Use Caseⓘ The primary purposes for which the tool was developed. | Prototyping |

| Hardware Information | |
|---|---|
| Categoryⓘ The general types of haptic output devices controlled by the tool. | Vibrotactile |
| Abstractionⓘ How broad the type of hardware support is for a tool. | Consumer |
| Device Namesⓘ The hardware supported by the tool. This may be incomplete. | Meta Quest |
| Device Templateⓘ Whether support can be easily extended to new types of devices. | No |
| Body Positionⓘ Parts of the body where stimuli are felt, if the tool explicitly shows this. | N/A |

| Interaction Information | |
|---|---|
| Driving Featureⓘ If haptic content is controlled over time, by other actions, or both. | Time |
| Effect Localizationⓘ How the desired location of stimuli is mapped to the device. | Device-centric |
| Non-Haptic Mediaⓘ Support for non-haptic media in the workspace, even if just to aid in manual synchronization. | None |
| Iterative Playbackⓘ If haptic effects can be played back from the tool to aid in the design process. | Yes |
| Design Approachesⓘ Broadly, the methods available to create a desired effect. | Procedural, Description |
| UI Metaphorsⓘ Common UI metaphors that define how a user interacts with a tool. | None |
| Storageⓘ How data is stored for import/export or internally to the software. | WAV |
| Connectivityⓘ How the tool can be extended to support new data, devices, and software. | None |

Additional Information

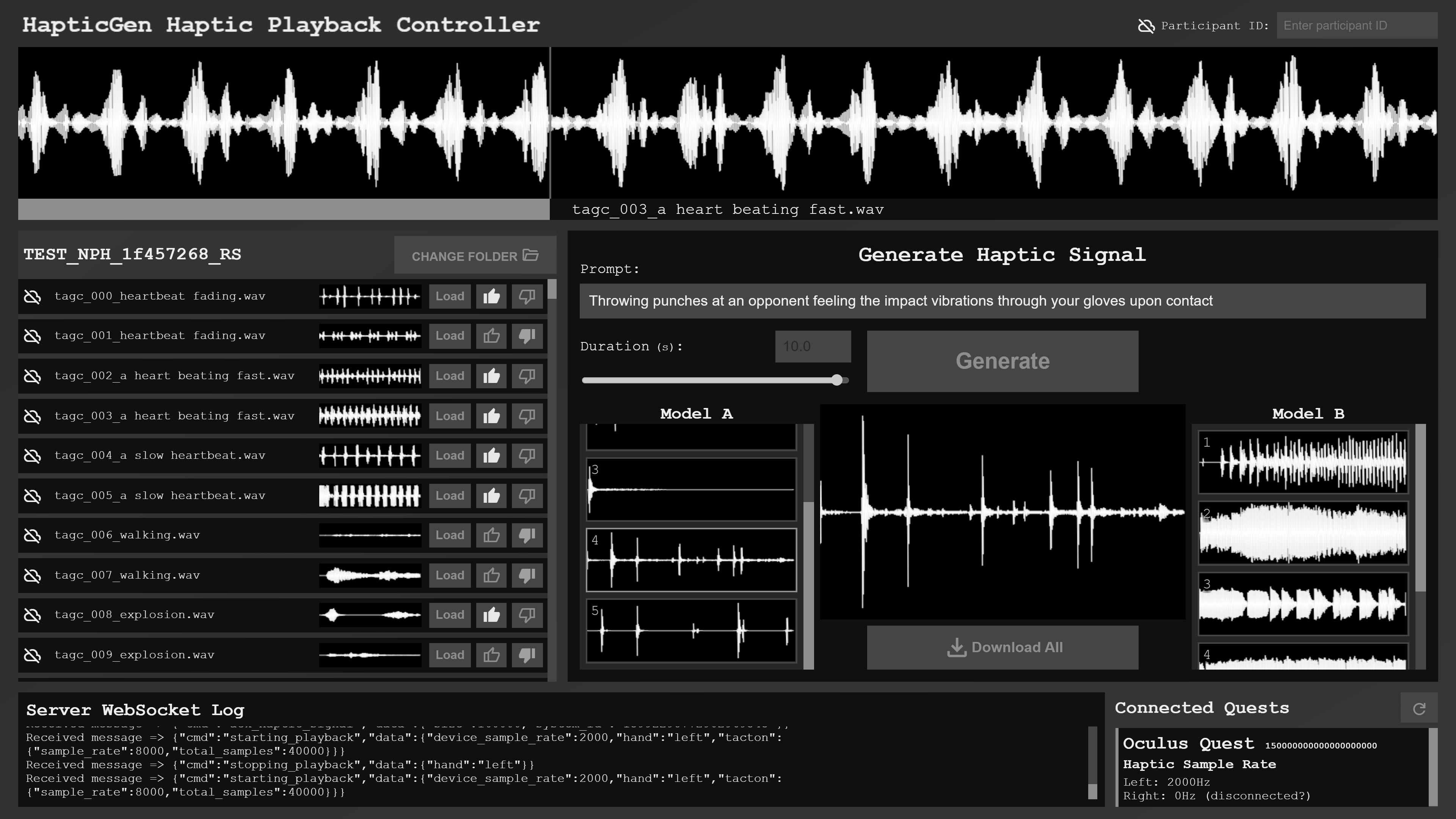

HapticGen uses a modified version of Meta’s AudioCraft project to generate haptic waveforms from user-specified text prompts. The AudioCraft model was fine-tuned on a haptic dataset that was created automatically from an existing text-to-audio dataset and then curated by expert participants. In the design interface, the user can specify the duration of the desired effect; every other aspect of the effect is left to the model.
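The workflow described above (a text prompt and a duration go in, a WAV waveform comes out) can be illustrated with a minimal sketch. This is not HapticGen's code: `HapticRequest`, `placeholder_generate`, and `write_wav` are hypothetical names, the 8 kHz sample rate and 150 Hz carrier are arbitrary assumptions, and the generator is only a stand-in that ignores the prompt's meaning where the real tool would call its fine-tuned model.

```python
import math
import struct
import wave
from dataclasses import dataclass


@dataclass
class HapticRequest:
    """Hypothetical request shape: a text prompt plus a duration in seconds."""
    prompt: str
    duration_s: float


def placeholder_generate(request, sample_rate=8000):
    """Stand-in for the fine-tuned model (assumption, not HapticGen's API).

    Ignores the prompt's meaning and returns a decaying 150 Hz sine whose
    length matches the requested duration. Samples lie in [-1.0, 1.0].
    """
    if request.duration_s <= 0:
        raise ValueError("duration_s must be positive")
    n_samples = int(round(request.duration_s * sample_rate))
    samples = []
    for i in range(n_samples):
        t = i / sample_rate
        envelope = math.exp(-3.0 * t / request.duration_s)
        samples.append(envelope * math.sin(2.0 * math.pi * 150.0 * t))
    return samples


def write_wav(path, samples, sample_rate=8000):
    """Write samples as mono 16-bit PCM, matching the WAV storage format."""
    frames = b"".join(
        struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767)) for s in samples
    )
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)  # 2 bytes per sample = 16-bit
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(frames)
```

Under these assumptions, `write_wav("effect.wav", placeholder_generate(HapticRequest("a short, sharp impact", 2.0)))` produces a two-second file. Only the duration is under the caller's control, which mirrors the single parameter exposed in the design interface.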

For more information, consult the CHI’25 paper and the GitHub repository.