ViviTouch

Tool Summary

| Metadata | |
|---|---|
| Release Yearⓘ The year a tool was first publicly released or discussed in an academic paper. | 2014 |
| Platformⓘ The OS or software framework needed to run the tool. | Unknown |
| Availabilityⓘ If the tool can be obtained by the public. | Unavailable |
| Licenseⓘ The type of license applied to the tool. | Unknown |
| Venueⓘ The venue(s) for publications. | IEEE Haptics Symposium |
| Intended Use Caseⓘ The primary purposes for which the tool was developed. | Haptic Augmentation, Prototyping |

| Hardware Information | |
|---|---|
| Categoryⓘ The general types of haptic output devices controlled by the tool. | Vibrotactile |
| Abstractionⓘ How broad the type of hardware support is for a tool. | Class |
| Device Namesⓘ The hardware supported by the tool. This may be incomplete. | Voice Coil |
| Device Templateⓘ Whether support can be easily extended to new types of devices. | No |
| Body Positionⓘ Parts of the body where stimuli are felt, if the tool explicitly shows this. | N/A |

| Interaction Information | |

|---|---|

| Driving Featureⓘ If haptic content is controlled over time, by other actions, or both. | Time |

| Effect Localizationⓘ How the desired location of stimuli is mapped to the device.

| Device-centric |

| Non-Haptic Mediaⓘ Support for non-haptic media in the workspace, even if just to aid in manual synchronization. | Audio, Visual |

| Iterative Playbackⓘ If haptic effects can be played back from the tool to aid in the design process. | Yes |

| Design Approachesⓘ Broadly, the methods available to create a desired effect.

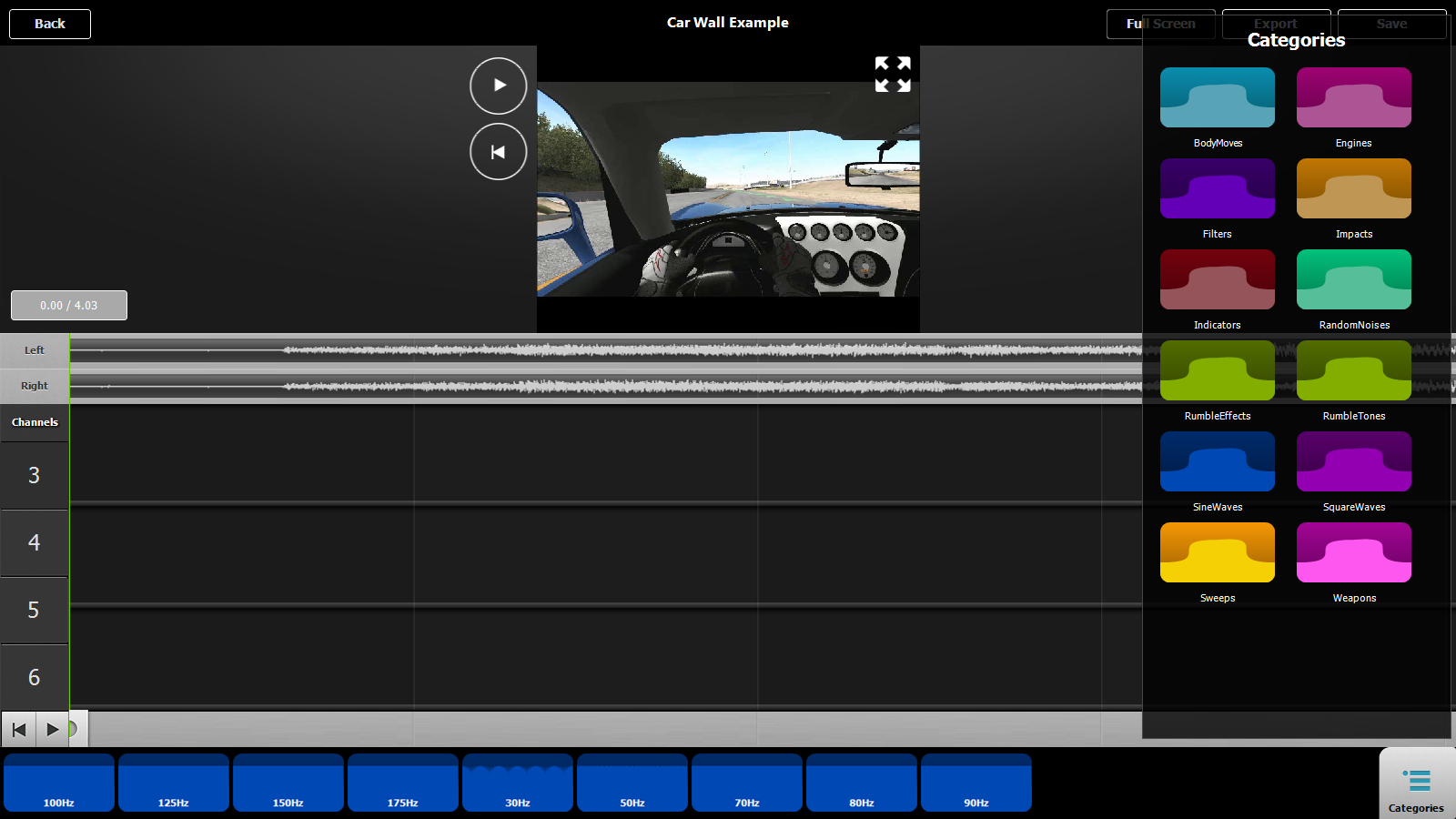

| Direct, Procedural, Additive, Library |

| UI Metaphorsⓘ Common UI metaphors that define how a user interacts with a tool.

| Track, Keyframe |

| Storageⓘ How data is stored for import/export or internally to the software. | MKV, Custom XML |

| Connectivityⓘ How the tool can be extended to support new data, devices, and software. | None |

Additional Information

ViviTouch is meant to support prototyping of vibrotactile haptics aligned to audio-visual content. Haptic media is created from waveforms and from filters that map the audio content at each moment in time to the vibrotactile channel. These filters, such as a low-pass filter, are meant to aid in synchronizing audio and haptic content. Effects and filters are assigned to output channels, each representing one actuator, and to haptic tracks. Using multiple tracks allows effects and filters to be layered on the same actuator at the same time.
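The filtering and track layering described above can be illustrated with a minimal sketch. It assumes mono audio given as a list of floats in [-1, 1], a one-pole (RC) low-pass filter as the audio-to-haptic mapping, and summation with clipping as the layering rule. The names `low_pass`, `sine`, `Track`, and `mix` are hypothetical; ViviTouch's actual filters, signal chain, and storage formats are not described here.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


def low_pass(samples: List[float], cutoff_hz: float, sample_rate: int) -> List[float]:
    """One-pole (RC) low-pass filter: keeps the low-frequency part of the
    audio and attenuates content above cutoff_hz (an assumed mapping)."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = dt / (rc + dt)
    out: List[float] = []
    y = 0.0
    for x in samples:
        y += alpha * (x - y)  # move a fraction alpha of the way toward the input
        out.append(y)
    return out


def sine(freq_hz: float, duration_s: float, sample_rate: int,
         amplitude: float = 1.0) -> List[float]:
    """Synthesized waveform, standing in for a procedural haptic effect."""
    n = int(duration_s * sample_rate)
    return [amplitude * math.sin(2.0 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]


@dataclass
class Track:
    """Hypothetical haptic track: one signal routed to one actuator channel."""
    channel: int
    samples: List[float]
    gain: float = 1.0


def mix(tracks: List[Track], length: int) -> Dict[int, List[float]]:
    """Layer tracks by summing all tracks routed to the same channel,
    then clip each output sample to [-1, 1]."""
    channels: Dict[int, List[float]] = {}
    for track in tracks:
        buf = channels.setdefault(track.channel, [0.0] * length)
        for i, s in enumerate(track.samples[:length]):
            buf[i] += track.gain * s
    for buf in channels.values():
        for i, v in enumerate(buf):
            buf[i] = max(-1.0, min(1.0, v))
    return channels


if __name__ == "__main__":
    rate = 8000  # assumed sample rate
    # Stand-in "audio": a 60 Hz rumble plus a 2 kHz whine.
    audio = [a + b for a, b in zip(sine(60, 0.5, rate, 0.5),
                                   sine(2000, 0.5, rate, 0.5))]
    # Filtered audio and a synthesized effect, layered on actuator channel 0.
    audio_track = Track(channel=0, samples=low_pass(audio, 200, rate))
    effect_track = Track(channel=0, samples=sine(175, 0.5, rate), gain=0.3)
    out = mix([audio_track, effect_track], length=len(audio))
    print(len(out[0]))  # 4000 samples for 0.5 s at 8 kHz
```

Summing is only one plausible layering rule; a real tool might instead normalize, use per-track envelopes, or let a filter track modulate an effect track.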

For more information, consult the 2014 IEEE Haptics Symposium paper.